llms.txt Guide: AI Search Visibility

Your site can rank at position 1 in Google and be nearly invisible to ChatGPT, Perplexity, and Claude. The reason is structural: AI language models do not read your website the way Google does. They fetch content on demand, work within limited context windows, struggle with JavaScript-heavy pages, and miss important content that is not clearly linked or easily readable. llms.txt is a file designed to address this problem.

Proposed in September 2024 by Jeremy Howard of Answer.AI and published at llmstxt.org, llms.txt is a plain Markdown file placed at the root of your website. It gives AI systems a curated, human-readable guide to your most important content so they do not have to guess what matters on your site. As of April 2026, Anthropic, Stripe, Zapier, Cloudflare, Vercel, and Hugging Face have all implemented it. An estimated 5 to 15% of websites have one. That gap is the opportunity.

This guide explains what llms.txt is, how it differs from robots.txt and your sitemap, which frameworks make it easy to generate automatically, the exact format to use, and how to write yours in 30 minutes. The free Claude SEO audit tool on this site already checks for llms.txt automatically as one of its 27 signals. If your site failed that check, this guide is where you fix it.

What This Guide Covers

- Key Takeaways

- What llms.txt Actually Is

- The 3-File AI Discovery Stack

- Why llms.txt Exists: The AI Retrieval Problem

- Who Is Already Using It

- The Exact llms.txt Format

- llms.txt vs llms-full.txt: Which Do You Need

- Framework Support: WordPress, Yoast, Webflow, Next.js

- How to Write Yours in 30 Minutes

- The Honest Assessment: What It Does and Does Not Do

- Where llms.txt Fits in Your Full GEO Stack

- Frequently Asked Questions

Key Takeaways

- llms.txt is a plain Markdown file at your site root that gives AI language models a curated guide to your most important content. It does not affect Google rankings. It is designed entirely for AI retrieval systems.

- Only 5 to 15% of websites have implemented llms.txt as of early 2026, according to lowtouch.ai research. That adoption gap is the first-mover opportunity: sites that implement now are forward-compatible when AI platforms formalize support.

- Anthropic, Stripe, Zapier, Cloudflare, Vercel, Hugging Face, and a growing list of developer-tool companies have already implemented it. No major AI platform has officially confirmed automatic reading of llms.txt as a first-class input yet, but developer-facing tools including Cursor, GitHub Copilot, and RAG frameworks do read it when present.

- WordPress users can generate llms.txt automatically using Yoast SEO. Webflow provides a system to upload it directly to your root. Next.js, Mintlify, and Astro all have community plugins or built-in support.

- Creating a basic llms.txt takes under 30 minutes for most sites. The effort-to-potential-impact ratio is higher than any other single AI visibility action I have documented in two years of GEO work.

What llms.txt Actually Is

llms.txt is a plain-text file written in Markdown format, hosted at the root of your website at yourdomain.com/llms.txt. It provides a structured, concise summary of what your site contains and where the most important content lives, written specifically for AI language models rather than for human readers or traditional search crawlers.

The simplest way to understand it is through an analogy. If your site were a physical library: your sitemap.xml is the complete card catalogue (every book listed), your robots.txt marks the restricted shelves (what crawlers cannot access), and your llms.txt is the librarian's recommended reading list (the curated shortlist of what matters most for a specific visitor's needs).

Unlike a sitemap, llms.txt is written for machines that read prose rather than for crawlers that follow links. Unlike robots.txt, it does not grant or deny access. It recommends priority. Unlike schema.org markup, it sits outside individual pages as a single domain-wide index that any AI system can read in one request rather than crawling page by page.

The file was proposed in September 2024 by Jeremy Howard, co-founder of Answer.AI, and the official specification is maintained at llmstxt.org. The naming convention is strict: the file must be named llms.txt, not llm.txt or llms-txt.txt, to ensure cross-platform AI discoverability.

The 3-File AI Discovery Stack

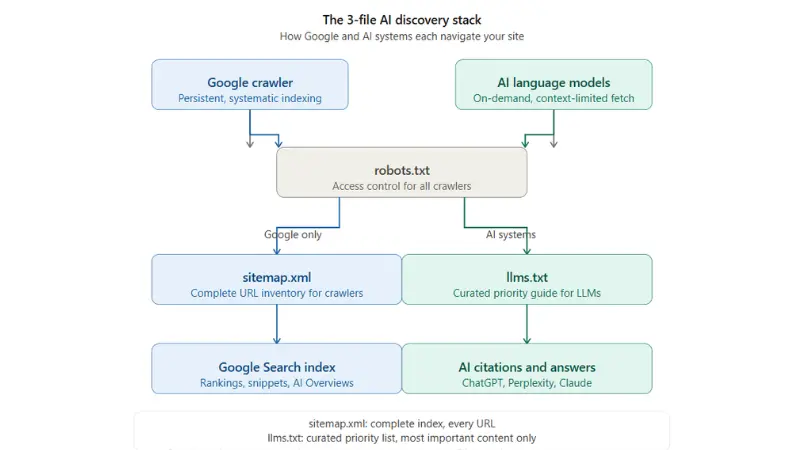

Understanding where llms.txt fits requires seeing all three files together. The diagram above shows how Google's crawler, AI retrieval systems, and language models each use a different file to understand your site.

The key distinction the diagram shows: Google's crawler reads robots.txt and sitemap.xml, then processes your HTML. AI systems like ChatGPT, Perplexity, and Claude also check robots.txt for access permissions, but they have a fundamentally different problem when they reach your content. HTML pages are built for human readers with navigation, ads, JavaScript rendering, and visual layouts. None of that structure helps an AI model extract the information it needs to answer a query. llms.txt solves this by providing a clean, pre-organized summary that skips the noise entirely.

Why llms.txt Exists: The AI Retrieval Problem

Traditional search crawlers work through systematic, persistent indexing. Googlebot visits your site on a regular schedule, stores what it finds in a massive index, and retrieves from that index when someone searches. The process is designed for completeness: Google tries to index everything accessible on your site over time.

AI language models work entirely differently. When a user asks ChatGPT or Perplexity a question, the system performs an on-demand retrieval of relevant web content at the moment of the query. It has no persistent memory of your site from previous visits. It works within limited context windows, which means it can only process a specific amount of content per retrieval request. And it struggles with several common web page structures that Google handles without difficulty.

The specific problems AI retrieval systems encounter on standard websites are: JavaScript-rendered content that requires a browser to render before the text is accessible, complex HTML navigation and layout elements that consume context window space without providing useful information, pages that are important but poorly linked internally (the AI has no way to discover them), and the absence of any signal about which content is most relevant to a given query type.

A site with 500 pages and an excellent sitemap gives Google everything it needs. The same site gives an AI retrieval system a navigation problem: which of the 500 pages is most relevant to the query at hand? Without llms.txt, the AI has to guess based on its training data and whatever it can retrieve in the moment. With llms.txt, you tell it directly.
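To make the context-window point concrete, here is a minimal Python sketch that estimates how much of a page's raw HTML is main-body text once scripts, styles, navigation, and footers are ignored. The sample page, the list of skipped tags, and the `content_ratio` helper are illustrative assumptions, not a description of how any specific AI retrieval system parses pages.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside script, style, nav, header, and footer."""

    SKIP = {"script", "style", "nav", "header", "footer"}

    def __init__(self):
        super().__init__()
        self.depth = 0    # number of skipped elements we are currently inside
        self.chunks = []  # main-body text fragments, in document order

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0 and data.strip():
            self.chunks.append(data.strip())


def content_ratio(html: str) -> float:
    """Fraction of the raw page's characters that is main-body text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks)
    return len(text) / len(html) if html else 0.0


# Hypothetical page used only for illustration.
page = (
    "<html><head><style>body{margin:0}</style>"
    "<script>window.analytics = [];</script></head>"
    "<body><nav><a href='/'>Home</a><a href='/blog'>Blog</a></nav>"
    "<main><h1>Pricing</h1><p>Plans start at $10 per month.</p></main>"
    "<footer>Copyright</footer></body></html>"
)
ratio = content_ratio(page)
print(f"{ratio:.0%} of the raw HTML is main-body text")
```

On real pages with heavy templates the ratio is often low, which is the overhead a curated Markdown index is meant to avoid.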

Who Is Already Using It

The adoption pattern as of April 2026 is revealing. Companies that implemented llms.txt first were primarily developer-tool companies whose target audience uses AI coding assistants: Anthropic itself (Claude's documentation), Vercel (Next.js documentation), Stripe (API documentation), Cloudflare (developer documentation), Zapier (integration documentation), and Hugging Face (model documentation). These companies benefit most immediately from llms.txt because their users regularly ask AI tools about their documentation, and accurate AI answers are a direct product support benefit.

The second wave of adoption is now underway among marketing websites, SaaS companies, and content publishers. Yoast SEO generating llms.txt automatically for WordPress sites is the signal that mainstream adoption has begun. When a plugin with millions of active installations includes a feature by default, that feature moves from early adopter to standard practice within 12 to 18 months.

For your specific situation: if your target clients use ChatGPT or Perplexity to research services in your category, the companies in your competitive set that implement llms.txt are giving AI systems a cleaner, more authoritative summary of their offering than your site currently provides. That is a citation and recommendation gap that is straightforward to close.

The Exact llms.txt Format

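The official specification requires Markdown formatting with a specific structure. Strictly speaking, the specification at llmstxt.org makes only the H1 title mandatory; the blockquote summary and the H2 link sections are optional there, but this guide treats all three as required because a file without them gives AI systems little to work with. Here is the complete format with an explanation of each section.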

Required Section 1: The H1 Title

The first line of the file is an H1 heading with your site or company name. This is what AI systems use to identify whose content the file is describing.

# Kulbhushan Pareek, SEO and AI Marketing Consultant

Required Section 2: The Blockquote Description

Immediately below the H1, a blockquote provides a 2 to 3 sentence summary of what the site or company does. This is the context paragraph that frames everything below it.

> Digital marketing consultant with 13+ years helping
> US, UK, and European businesses build organic search
> visibility and AI search citation presence. Services
> cover SEO consulting, GEO optimization, AI marketing
> automation, and lead generation. Verified results:
> $385,091 organic revenue for a US B2B client in 18 months.

Required Section 3: H2 Sections With Links

The body of the file uses H2 headings to organize content into logical categories, with each important page listed as a Markdown link with a brief description. The description after the link tells the AI what that page is about and when it is relevant.

## Services
- [SEO Consulting](https://kulbhushanpareek.com/services/seo-consulting/): Full-service SEO strategy and execution for US, UK, and European B2B companies. Technical audits, keyword research, content planning, link building, monthly GSC reporting.
- [AI Marketing Automation](https://kulbhushanpareek.com/services/ai-automation/): AI-powered marketing workflows replacing manual processes. Content production systems, automated reporting, competitor monitoring.
- [GEO Optimization](https://kulbhushanpareek.com/blog/geo-aeo-optimization-the-complete-guide): Strategy for getting cited in ChatGPT, Perplexity, and Google AI Mode. GEO content restructuring, schema implementation, platform presence building.

## Case Studies
- [Indigo Software SEO Campaign](https://kulbhushanpareek.com/blog/indigo-software-seo-case-study-385k-organic-revenue): 18-month B2B SEO and AI visibility campaign. $385,091 verified organic revenue, 482% traffic growth, 369 AI-cited pages.

## Key Guides
- [47 Claude SEO Prompts](https://kulbhushanpareek.com/blog/47-claude-ai-seo-prompts-that-fix-every-seo-problem): Complete library of copy-paste Claude prompts for every SEO task including audits, keyword research, schema, and GEO optimization.
- [LLM Citation Strategy](https://kulbhushanpareek.com/blog/build-backlinks-llm-ai-citation-strategy): 5-method approach to building backlinks and platform presence that earns citations in ChatGPT, Perplexity, and Google AI Overviews.
- [Technical SEO Audit Checklist](https://kulbhushanpareek.com/blog/claude-technical-seo-audit-checklist): 12-point technical SEO checklist using Claude and free tools in 30 minutes. Includes Check 12 for AI crawler accessibility.

## Tools
- [Free Claude SEO Audit](https://kulbhushanpareek.com/tools/claude-seo-audit): Free 27-check SEO and GEO audit tool. Checks AI crawler access, llms.txt presence, and author schema alongside 24 classic SEO signals.

## Contact
- [Book a Free Strategy Call](https://kulbhushanpareek.com/book-meeting): Free 30-minute review of your SEO and AI search visibility.
- [Email](mailto:hello@kulbhushanpareek.com): Direct contact.

Optional Section: Notes on Sensitive or Excluded Content

You can add a final section noting what the llms.txt file does not cover and why. This is useful for sites with private documentation or content you want AI systems to handle carefully.

## Notes
This file covers public-facing content only. Client reports and campaign data are confidential and not linked here.
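Putting the sections above together, the following Python sketch renders a complete file from structured data. The `render_llms_txt` function, its parameters, and the example.com URLs are hypothetical names chosen for illustration; the output layout simply mirrors the H1, blockquote, H2 link lists, and Notes section described in this guide.

```python
def render_llms_txt(title, summary, sections, notes=None):
    """Render an llms.txt document as a string.

    sections maps each H2 heading to a list of (name, url, description) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, description in links:
            lines.append(f"- [{name}]({url}): {description}")
        lines.append("")
    if notes:
        # The Notes section is optional and goes last.
        lines += ["## Notes", "", notes, ""]
    return "\n".join(lines)


# Placeholder content; swap in your own pages and descriptions.
document = render_llms_txt(
    title="Example Co",
    summary="Consultancy helping B2B companies with SEO and AI search visibility.",
    sections={
        "Services": [
            ("SEO Consulting", "https://example.com/services/seo/",
             "Technical audits, keyword research, and content planning."),
        ],
        "Contact": [
            ("Book a Call", "https://example.com/book/",
             "Free 30-minute review."),
        ],
    },
    notes="This file covers public-facing content only.",
)
print(document)
```

Keeping the page list in data rather than in a hand-edited file makes the monthly update a matter of adding one tuple and regenerating.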

Linking to Markdown Versions of Pages

The official specification recommends linking to Markdown versions of pages (ending in .md) rather than the HTML URLs where possible. Markdown files strip navigation, ads, and JavaScript and give AI systems clean, token-efficient content. If your CMS generates Markdown versions of posts automatically (Mintlify, Ghost, and some Hugo configurations do), use those URLs. If not, your HTML URLs work fine. The specification permits both.
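As a small illustration of the Markdown-URL convention, this Python sketch derives the candidate `.md` address for a page. It assumes the convention described in the llmstxt.org proposal (append `.md` to the page URL, or `index.html.md` when the URL has no file name); `markdown_variant` is a hypothetical helper, and whether your site actually serves a file at the resulting URL depends entirely on your CMS.

```python
from urllib.parse import urlsplit, urlunsplit


def markdown_variant(url: str) -> str:
    """Return the URL where a Markdown copy of the page would be expected."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    if last_segment == "":
        # Directory-style URL with no file name.
        path += "index.html.md"
    elif not last_segment.endswith(".md"):
        path += ".md"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


print(markdown_variant("https://example.com/docs/intro.html"))
print(markdown_variant("https://example.com/services/"))
```

Only swap an HTML link for its `.md` variant in llms.txt after confirming the Markdown URL returns real content.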

llms.txt vs llms-full.txt: Which Do You Need

The llms.txt specification includes two distinct files with different functions.

llms.txt is the curated index: a file with links and short descriptions pointing to your most important pages. It is typically short, between 200 and 2,000 words, and gives AI systems the map to your site. This is the file this guide has been describing. Almost every site should have this.

llms-full.txt is the complete content dump: a single Markdown file containing the full text of every page on your site flattened into one document. One site documented on Search Engine Land maintained an llms-full.txt file that was 115,378 words and 966KB. This format gives AI systems the entire content of a site in one request, eliminating the need to follow individual links. It was developed by Mintlify in collaboration with Anthropic and subsequently included in the official llms.txt proposal.

Who needs llms-full.txt: developer documentation sites where AI coding assistants need comprehensive API reference, platforms like Cursor and GitHub Copilot that fetch llms-full.txt to provide complete library context, and any site where the AI system needs to answer detailed technical questions from the full content body rather than just navigate to the right page.

Who needs only llms.txt: marketing websites, consulting sites, e-commerce sites, and blogs. For these sites, the curated index approach of standard llms.txt provides the right level of guidance without the server overhead of maintaining a massive single-file content dump.

For kulbhushanpareek.com and sites with similar structures, standard llms.txt is the correct choice. Create llms-full.txt only if you have developer documentation or a deep technical content library where AI assistants need full content access.
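If you are unsure which file you need, it can help to measure what a full dump would weigh. The Python sketch below flattens a few pages into one document and reports its size. The layout used by `build_llms_full` (one H2 per page with a source line) is an assumption for illustration only, since this guide does not prescribe an exact llms-full.txt layout, and the page data is made up.

```python
def build_llms_full(title, pages):
    """Concatenate pages into one Markdown document.

    pages is a list of (page_title, url, markdown_body) tuples.
    """
    parts = [f"# {title}", ""]
    for page_title, url, body in pages:
        parts += [f"## {page_title}", "", f"Source: {url}", "", body.strip(), ""]
    return "\n".join(parts)


def size_report(text):
    """Word count and UTF-8 size, the two figures quoted for llms-full.txt files."""
    return {
        "words": len(text.split()),
        "kilobytes": round(len(text.encode("utf-8")) / 1024, 1),
    }


# Hypothetical documentation pages.
pages = [
    ("Quickstart", "https://example.com/docs/quickstart", "Install the CLI, then run init."),
    ("API Reference", "https://example.com/docs/api", "GET /items returns a list of items."),
]
full_text = build_llms_full("Example Docs", pages)
report = size_report(full_text)
print(report)
```

A report in the hundreds of kilobytes suggests the file is only worth maintaining for documentation-heavy sites, which matches the guidance above.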

Framework Support: WordPress, Yoast, Webflow, Next.js

The framework support landscape for llms.txt has developed rapidly since early 2026. Here is the current status for the most common platforms.

WordPress with Yoast SEO

Yoast SEO now generates llms.txt automatically for WordPress sites. The file is created from your published posts and pages, with your most recently updated content prioritized. Enable it in the Yoast dashboard under the SEO settings. The Yoast-generated file includes curated high-priority URLs, your latest blog posts, key documentation and other pages most relevant to LLMs, and optional link titles that help AI tools interpret page intent.

If you use Rank Math (which this site uses), a similar plugin is available from the Rank Math ecosystem. Check the Rank Math plugin directory for the current llms.txt module, as the feature set was being actively developed at publication time. As an alternative, the manual creation approach below works perfectly alongside Rank Math's existing SEO functions.

Webflow

Webflow provides a native system to upload llms.txt directly to your root directory via the site settings. No custom code or developer involvement is required. Go to Site Settings, Publishing, and look for the File Manager or Custom Files section. Upload your llms.txt file there and it will be served at yourdomain.com/llms.txt automatically.

Next.js

For Next.js sites, create a static file at public/llms.txt and it will be served at the root automatically. For dynamically generated llms.txt that updates as you add new content, create a route handler at app/llms.txt/route.ts that generates the file content from your CMS or content layer. The Vercel documentation at vercel.com/llms.txt shows their own implementation as a reference.

Mintlify

Mintlify generates both llms.txt and llms-full.txt automatically on every deploy. If you use Mintlify for documentation, your files are already being created. Check yourdomain.com/llms.txt to confirm they are live.

Hugo, Jekyll, and Static Site Generators

For static site generators, create a template file in your layouts directory that generates llms.txt from your content front matter. Hugo users can add llms.txt to their static directory for a manually maintained file or create a custom output format in config.toml for automatic generation. The community has published templates for both approaches in the Hugo forums.

PHP Sites (Custom CMS like kulbhushanpareek.com)

For PHP sites with custom CMS structures, the simplest approach is a static llms.txt file maintained manually and updated monthly or whenever major new content is published. Create the file using any text editor, upload it to your site root (public_html/llms.txt), and verify it is accessible at yourdomain.com/llms.txt. This site uses this approach. The manual maintenance takes 5 minutes per update.

How to Write Yours in 30 Minutes

This is the step-by-step process for creating a complete, well-structured llms.txt file for most consulting, marketing, SaaS, or content sites. Total time: 25 to 35 minutes.

Step 1: Open a Text Editor (2 minutes)

Use any plain text editor. Notepad on Windows, TextEdit on Mac (set to plain text mode, not rich text), VS Code, or any code editor. Do not use Microsoft Word or Google Docs unless you save as plain text, as rich text editors add formatting characters that corrupt Markdown.

Step 2: Write the H1 and Blockquote (5 minutes)

Your H1 is your business or personal brand name. Your blockquote is a 2 to 3 sentence description that answers: what do you do, who do you serve, and what is the one most important result or proof point. Keep the blockquote factual and specific. Avoid marketing language like "best in class" or "industry-leading." Write as if briefing a researcher, not selling to a prospect.

Step 3: List Your Most Important Pages by Category (15 minutes)

Create H2 sections for each major content category: Services, Case Studies, Key Guides or Resources, Tools if applicable, and Contact. Under each H2, add a Markdown link for each important page with a one to two sentence description. The description should answer what the page covers and who it is for, not repeat the page title.

Rules for page selection: include every service page, every case study, your 5 to 10 highest-traffic or highest-value blog posts, any free tools or resources, and your contact and booking pages. Do not include every blog post. The purpose is curation, not comprehensiveness. AI systems benefit from a prioritized list, not an exhaustive inventory. Your sitemap already handles the exhaustive inventory.
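The selection rules above can be sketched in a few lines of Python. The `kind` labels, the `monthly_visits` field, and the `select_pages` helper are hypothetical; in practice the traffic numbers would come from your analytics export, and "highest-value" may be a manual judgment rather than a visit count.

```python
ALWAYS_INCLUDE = {"service", "case_study", "tool", "contact"}


def select_pages(pages, max_posts=10):
    """Keep every core page, plus only the top blog posts by traffic."""
    core = [p for p in pages if p["kind"] in ALWAYS_INCLUDE]
    posts = sorted(
        (p for p in pages if p["kind"] == "post"),
        key=lambda p: p["monthly_visits"],
        reverse=True,
    )
    return core + posts[:max_posts]


# Made-up inventory for illustration.
site = [
    {"url": "/services/seo/", "kind": "service", "monthly_visits": 300},
    {"url": "/blog/a/", "kind": "post", "monthly_visits": 50},
    {"url": "/blog/b/", "kind": "post", "monthly_visits": 900},
    {"url": "/blog/c/", "kind": "post", "monthly_visits": 400},
    {"url": "/contact/", "kind": "contact", "monthly_visits": 80},
]
shortlist = select_pages(site, max_posts=2)
print([p["url"] for p in shortlist])
```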

Step 4: Add the Notes Section (3 minutes)

Add a brief Notes section if your site has content you want AI systems to understand is excluded. For most sites this is private client data, confidential reports, or draft content. One or two sentences is sufficient.

Step 5: Save as Plain Text and Upload (5 minutes)

Save the file as llms.txt (not llms.md or llms.txt.txt). Upload it to your site root via FTP, SFTP, or your hosting control panel's file manager. For cPanel-based hosting (like Hostinger VPS), navigate to File Manager, go to public_html, and upload the file directly there.

Step 6: Verify and Submit (5 minutes)

Open a new browser tab and go to yourdomain.com/llms.txt. You should see the raw Markdown source as plain text, with the # and - characters visible. If you see a 404 error, the file is not in the correct directory. If you instead see formatted text (bold, visually rendered headers), something such as a browser extension is rendering the Markdown for you, which is fine as long as the URL itself returns the plain file.

After verifying, go to Google Search Console, use URL Inspection on yourdomain.com/llms.txt, and click Request Indexing. Do the same in Bing Webmaster Tools. Also add the URL to your XML sitemap as an additional page so it gets discovered consistently.
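The structural part of this verification can also be scripted. The Python sketch below checks a file's text against the layout this guide recommends: an H1 first, a blockquote summary next, at least one H2 section, and list items written as Markdown links. `check_llms_txt` is a hypothetical helper and is stricter than the official proposal, which only makes the H1 mandatory.

```python
import re

# A list item of the form "- [name](url)" with an optional ": description".
LINK_ITEM = re.compile(r"^- \[[^\]]+\]\([^)\s]+\)(: .+)?$")


def check_llms_txt(text):
    """Return a list of structural problems; an empty list means the basics look right."""
    problems = []
    lines = [line.rstrip() for line in text.splitlines()]
    non_empty = [line for line in lines if line]
    if not non_empty or not non_empty[0].startswith("# "):
        problems.append("first non-empty line should be an H1 ('# Name')")
    if len(non_empty) < 2 or not non_empty[1].startswith("> "):
        problems.append("expected a blockquote summary ('> ...') right after the H1")
    if not any(line.startswith("## ") for line in lines):
        problems.append("no H2 sections found")
    for number, line in enumerate(lines, start=1):
        if line.startswith("- ") and not LINK_ITEM.match(line):
            problems.append(f"line {number}: list item is not '- [name](url): description'")
    return problems


good = (
    "# Example Co\n\n> Short factual summary.\n\n"
    "## Services\n\n- [SEO](https://example.com/seo/): Audits and strategy.\n"
)
bad = "## Services\n- no link here\n"
print(check_llms_txt(good))
print(check_llms_txt(bad))
```

To check a live file, fetch it with `urllib.request.urlopen`, decode the body as UTF-8, and pass the text to the same function.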

Step 7: Run the Free Audit to Confirm (2 minutes)

Go to the free Claude SEO audit tool and run your site. The llms.txt check in the audit will now show as passed. If it still shows as failed, clear your browser cache and run again, or check that the file is genuinely accessible at the root URL (not in a subdirectory).

The Honest Assessment: What It Does and Does Not Do

Every guide on llms.txt should include an honest section on what is confirmed versus what is speculative. The research is clear, and practitioners deserve the full picture rather than inflated promises.

What Is Confirmed

Developer-facing AI tools do read llms.txt. Cursor, GitHub Copilot, Continue, Aider, and various RAG (Retrieval-Augmented Generation) frameworks fetch and use llms.txt when it is present. For sites with a developer audience, this is an active and verifiable benefit.

AI retrieval pipelines can be prompted to fetch it. When users or developers ask ChatGPT, Claude, or Perplexity to read your llms.txt as part of a workflow or research task, the file provides structured, clean information that those systems would otherwise have to scrape from your HTML pages. This is a practical user-experience benefit for anyone using AI assistants to research your site.

It provides a clean content signal. Even if no major AI platform reads it automatically yet, llms.txt creates a clean, token-efficient summary of your site that reduces the noise-to-signal ratio when an AI system does encounter your content. Sites with llms.txt are forward-compatible for when platform support formalizes.

What Is Speculative or Unconfirmed

No major AI platform has officially confirmed automatic reading of llms.txt as a first-class input. As of April 2026, Anthropic, OpenAI, and Perplexity have not published statements committing to read it automatically. Google's John Mueller has noted that major crawlers do not currently prioritize these files over standard HTML. Semrush research found no statistical correlation between implementing llms.txt and improved performance in AI results in their controlled study.

The absence of confirmation is not evidence of no value. Web standards typically follow the pattern that sites publish first and platform support formalizes after adoption crosses a threshold. Robots.txt existed as a convention before any search engine officially committed to respecting it. The adoption curve for llms.txt is tracking a similar pattern, with developer tool adoption leading and AI platform adoption following.

The honest conclusion: llms.txt is a low-effort, low-cost forward investment. Creating it takes 30 minutes. It provides confirmed value for developer tool AI workflows today and positions you for the emerging mainstream AI platform support. If it never becomes a universally consumed standard, you have a clean, text-only summary of your brand that any AI agent can easily read when prompted. That has independent value regardless of automatic adoption.

Where llms.txt Fits in Your Full GEO Stack

llms.txt is one technical signal in a broader AI search visibility strategy. Understanding where it fits prevents the mistake of treating it as a complete GEO solution rather than one layer of a multi-signal approach.

The GEO stack has five layers, each addressing a different aspect of how AI systems discover, evaluate, and cite your content. llms.txt addresses discovery: helping AI systems find and understand your content. The other four layers address content structure (GEO-ready formatting with direct-answer openings and named statistics), technical signals (schema markup, AI crawler access in robots.txt, Bing Webmaster Tools verification), entity authority (LinkedIn publishing, Reddit presence, platform citations on G2 and Clutch), and brand mentions (expert publication placements, HARO responses, earned citations). All five layers are required for consistent AI citation frequency. llms.txt alone does not produce citations. It removes one technical friction point in the path to being cited.

For the complete GEO implementation guide covering all five layers, the GEO and AEO optimization guide covers the full technical and content approach. For building the platform presence that produces AI citations at scale, the LLM citation and backlink strategy guide covers the complete 5-method approach with verified campaign results.

The technical foundation layer (which llms.txt is part of) also includes the AI crawler accessibility check from the 12-point technical SEO audit checklist. If your robots.txt is not explicitly allowing ChatGPT-User, PerplexityBot, and ClaudeBot, fix that alongside creating your llms.txt file. Both changes take under 30 minutes combined and together address the two most commonly missed technical AI visibility prerequisites.
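A quick way to run the crawler-access check is Python's standard `urllib.robotparser`. The sketch below reports which of the three user agents named above would be blocked by a given robots.txt. The sample rules and the `blocked_ai_agents` helper are illustrative; note that it detects blocking rather than confirming an explicit Allow line, and it reflects the standard library's interpretation of the rules, which may differ in edge cases from how each crawler behaves.

```python
from urllib.robotparser import RobotFileParser

AI_AGENTS = ["ChatGPT-User", "PerplexityBot", "ClaudeBot"]


def blocked_ai_agents(robots_txt, url="https://example.com/"):
    """Return the AI user agents that these robots.txt rules would block for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]


# Made-up robots.txt that allows everyone except one AI crawler.
sample_rules = """\
User-agent: *
Allow: /

User-agent: PerplexityBot
Disallow: /
"""
print(blocked_ai_agents(sample_rules))
```

For your own site, read the contents of yourdomain.com/robots.txt into a string and pass your homepage URL as the second argument.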

For the consulting service that implements the full GEO stack as an end-to-end engagement, the SEO consulting service covers GEO optimization as a core component of every engagement since the AI search shift became measurable in campaign data. The verified results from implementing this full stack are documented in the Indigo Software case study: 369 AI-cited pages, 534 brand mentions, and $385,091 in organic revenue attributed over 18 months.
