Why Did My Website Traffic Drop?

Your Google Analytics shows a drop. Maybe it happened suddenly last week. Maybe it has been a slow slide over the past three months. Either way, you are looking at the numbers and trying to figure out what went wrong. Working with an SEO expert who can show you proven campaign results makes it easier to diagnose the problem and take the right steps forward.

Here is the most important thing to understand before diagnosing anything: a website traffic drop in 2026 has different causes than a traffic drop in 2022. The search landscape changed fundamentally when Google introduced AI Overviews at scale, when AI Mode rolled out broadly, and when buyers started using ChatGPT and Perplexity as search tools rather than just AI assistants. If you are applying a 2022 diagnostic framework to a 2026 traffic drop, you will likely misidentify the cause and apply the wrong fix.

This guide covers every real cause of a website traffic drop in the current environment, with a specific diagnostic and fix for each one. By the end, you will know exactly what happened to your traffic and what to do about it.

Before You Diagnose: Is the Drop Real?

The first question before investigating any traffic drop is whether the data you are looking at is accurate. Many reported traffic drops are not real drops in website visits at all; they are measurement problems. A measurement problem can often be fixed in about 20 minutes, while trying to fix a real traffic drop based on false data can waste weeks.

Three things to check before assuming the drop is real

Check whether your GA4 tag is still firing correctly. Go to Google Analytics 4, open the Realtime report, and visit your site in a separate browser window. If you do not see yourself in the Realtime report within 30 seconds, your tracking tag is broken or blocked. A tag that stopped working on a specific date will show a traffic drop from that date onward, even if your actual visits never changed.

Check whether you recently installed or changed a consent banner. Many cookie consent tools block Google Analytics from firing until a user actively accepts cookies. If you added or updated a consent banner, you may have reduced your measured traffic even while your actual traffic stayed the same or grew. This is a measurement reduction, not a real drop.

Check Google Search Console, not just GA4. GA4 can be misconfigured, but Google Search Console reports the clicks and impressions Google recorded directly on its own search results. Compare like with like: GSC covers only Google Search, so set it against GA4's Google organic search sessions. If GSC clicks are flat but GA4 organic traffic dropped, the issue is tracking, not traffic. If both show a drop, the traffic itself changed. Open GSC, go to Performance, compare the last 28 days against the previous 28 days, and look at Total Clicks. For Google organic traffic, that number is your most reliable baseline.
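The GSC-versus-GA4 comparison can be written down as a small decision rule. This is a minimal sketch, assuming you have exported GSC total clicks and GA4 Google organic sessions for two equal-length periods; the function names and the 10% threshold are hypothetical choices, not a Google-defined cutoff.

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from the earlier period to the later one."""
    if before == 0:
        raise ValueError("earlier period has no data; cannot compute a change")
    return (after - before) / before * 100


def diagnose_drop(gsc_before, gsc_after, ga4_before, ga4_after, threshold=10.0):
    """Return "tracking", "real", or "none" for a reported drop.

    gsc_*: GSC total clicks; ga4_*: GA4 Google organic sessions.
    threshold: hypothetical cutoff, in percent, for what counts as a drop.
    """
    gsc_dropped = pct_change(gsc_before, gsc_after) <= -threshold
    ga4_dropped = pct_change(ga4_before, ga4_after) <= -threshold
    if ga4_dropped and not gsc_dropped:
        # Google recorded the clicks, but GA4 did not see the visits.
        return "tracking"
    if gsc_dropped:
        # Google itself recorded fewer clicks, so the traffic changed.
        return "real"
    return "none"
```

For example, `diagnose_drop(12000, 11800, 9500, 6200)` returns `"tracking"`: GSC clicks fell under 2% while GA4 sessions fell about 35%, which points at the tag or consent setup rather than at search visibility.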

If both GA4 and GSC show a genuine drop, move to the diagnostic reasons below.

Reason 1: Google AI Overviews Are Absorbing Your Clicks

This is the most common reason for a website traffic drop in 2026, and the one that most site owners misidentify as something they did wrong.

Google AI Overviews are AI-generated summaries that appear above organic search results for an increasing share of queries. When an AI Overview is present, it answers the user's question directly on the results page, and many users read the answer and move on without clicking any result at all. A Pew Research Center study (July 2025) found that users who saw an AI summary clicked a traditional search result on about 8% of visits, compared with about 15% for users who did not see one. For informational content, that is roughly a halving of the clicks you would otherwise earn from the same rankings.

By early 2026, AI Overviews appear on roughly 47% of commercial queries, according to BrightEdge research, and the rate is even higher for informational queries. If a significant portion of your traffic came from informational content such as how-to guides, definition pages, or FAQ content, you have very likely been affected by AI Overview click absorption, whether or not your rankings changed.

How to diagnose this cause

Open Google Search Console. Go to Performance. Filter by your top pages and look at the impression and click data for your highest-traffic informational pages. If you see impressions staying flat or growing while clicks dropped, that is the signature of AI Overview click absorption. You are still visible in search. People are seeing your content. They are just not clicking through because the AI answered the question above your result.
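With many pages, it helps to screen for this signature automatically. The sketch below assumes you have exported impressions and clicks per page for two comparable periods from GSC; the `PageStats` type and both thresholds are hypothetical. Flat impressions with falling clicks is consistent with AI Overview absorption but can also come from other results-page changes, so treat the output as a shortlist to check by hand.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    impressions_before: int
    impressions_after: int
    clicks_before: int
    clicks_after: int


def ai_overview_suspects(pages, max_impression_loss=0.05, min_click_loss=0.20):
    """Return URLs whose impressions held steady while clicks fell.

    Hypothetical thresholds (fractions): impressions may fall by at most 5%,
    and clicks must fall by at least 20%, for a page to be flagged.
    """
    suspects = []
    for p in pages:
        if p.impressions_before == 0 or p.clicks_before == 0:
            continue  # no baseline to compare against
        imp_change = (p.impressions_after - p.impressions_before) / p.impressions_before
        click_change = (p.clicks_after - p.clicks_before) / p.clicks_before
        if imp_change >= -max_impression_loss and click_change <= -min_click_loss:
            suspects.append(p.url)
    return suspects
```

A page whose impressions rose from 10,000 to 10,400 while clicks fell from 500 to 280 would be flagged; a page that lost impressions along with clicks would not, because that pattern points to a ranking loss instead.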

The fix

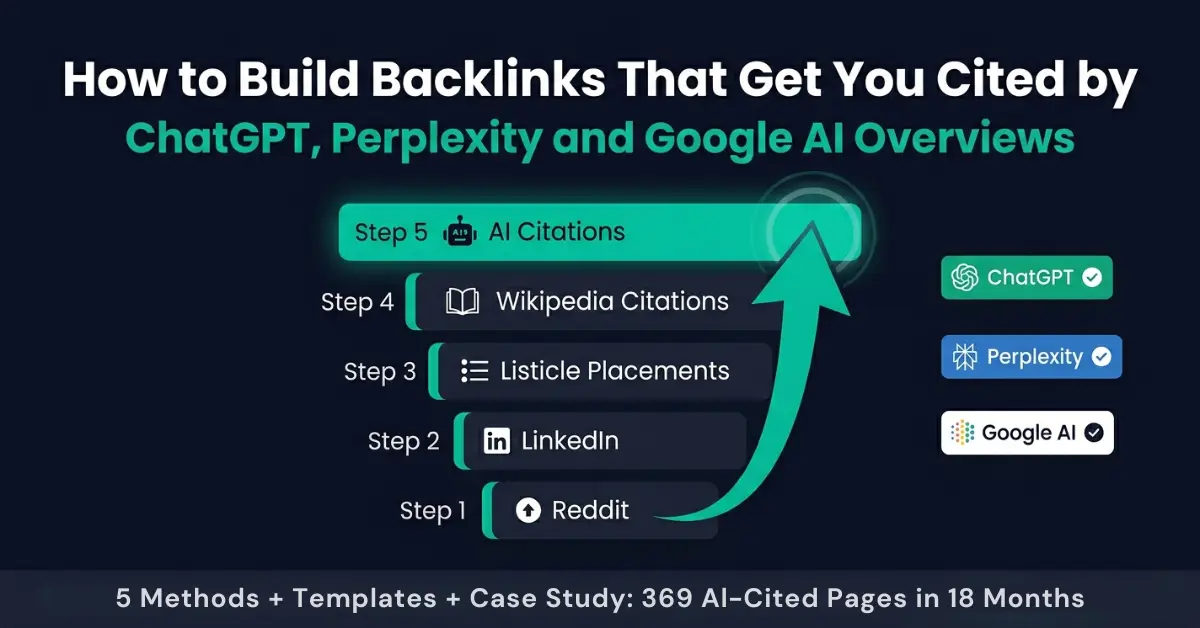

The fix for AI Overview click absorption is not to abandon your rankings. It is to get your content cited inside the AI Overview itself, so that users who read the AI answer see your brand as the source. A citation gives you two things: clicks from users who follow the cited link or scroll past the AI answer, and an association between your brand and the answer for users who never click through.

The step-by-step guide for getting your content cited in Google AI Overviews is covered in the companion post, How to Get Cited in Google AI Overviews. The short version: answer-first content structure, named statistics with verifiable sources, FAQPage schema markup, and consistent entity authority signals across your site.
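As an illustration of the FAQPage markup step, here is a minimal sketch that builds schema.org `FAQPage` JSON-LD from question and answer pairs, ready to paste into a page's HTML. The function name and the sample question are hypothetical. Note that since August 2023 Google shows FAQ rich results only for well-known, authoritative government and health sites, so for most sites this markup describes the page's structure rather than earning a visual enhancement.

```python
import json


def faq_page_jsonld(faqs):
    """Build a schema.org FAQPage JSON-LD script tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    # Escape "</" so an answer containing "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"
```

Each question and answer in the markup should match an FAQ that is actually visible on the page.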

Reason 2: Google AI Mode Changed What Content Ranks

Google AI Mode is a more recent and more significant change than AI Overviews. AI Mode is a separate, conversational search experience in which an AI-generated response, rather than a list of links with an AI summary above it, is the primary interface, and it is used for a growing share of queries. In AI Mode, the way Google evaluates and surfaces content differs from traditional search ranking.

AI Mode favors content that is structured as direct answers to specific questions, that covers a topic with sufficient depth and breadth to demonstrate genuine expertise, and that has consistent entity recognition across the web. Thin content that ranked through keyword optimization alone but lacks real depth is significantly more vulnerable in AI Mode than in traditional search.

If your traffic drop is concentrated on a specific category of content, particularly informational pages that were ranking for single keywords without providing comprehensive coverage of the topic, AI Mode's shift in quality evaluation is likely a contributing factor.

The fix

The Google AI Mode SEO strategy guide explains in detail what changed and what to prioritize. The core action is restructuring your most important pages to provide genuine topical depth, with direct-answer opening paragraphs for each section, sourced statistics, and the entity signals that AI Mode uses to evaluate expertise.

Reason 3: A Core Algorithm Update Demoted Your Pages

Google makes thousands of changes to Search every year, but core updates are broad ranking changes, released a few times a year, that can demote entire categories of sites or content types. If your traffic dropped sharply around a specific date and then stabilized at the lower level, a core update is likely involved.

How to diagnose this cause

First, identify the exact date your traffic dropped by looking at the GSC Performance report day by day. Then check whether Google announced a core update around that date. Google lists ranking updates and their rollout dates on the Google Search Status Dashboard and announces them on the Google Search Central Blog and the Search Liaison account on X (formerly Twitter). Tools like Semrush Sensor and MozCast track SERP volatility and can confirm whether widespread ranking changes happened around your drop date.
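Both steps can be roughed out in code. This sketch assumes a daily clicks export from GSC as `(date, clicks)` pairs; it picks the day where the average of the following week falls furthest below the average of the preceding week, then checks that date against update rollout windows you copy by hand from Google's announcements. The function names, the 7-day window, and the 3-day slack are hypothetical choices.

```python
from datetime import date, timedelta


def find_drop_date(daily_clicks, window=7):
    """Return the date where the mean of the next `window` days falls furthest
    below the mean of the previous `window` days, or None if clicks never fall.

    daily_clicks: list of (date, clicks) tuples sorted by date.
    """
    best_date, best_gap = None, 0.0
    for i in range(window, len(daily_clicks) - window + 1):
        before = sum(c for _, c in daily_clicks[i - window:i]) / window
        after = sum(c for _, c in daily_clicks[i:i + window]) / window
        gap = before - after
        if gap > best_gap:
            best_date, best_gap = daily_clicks[i][0], gap
    return best_date


def matching_updates(drop_date: date, updates, slack_days: int = 3):
    """Return names of updates whose rollout window (widened by slack_days) contains drop_date.

    updates: list of (name, start_date, end_date) entered from Google's announcements.
    """
    slack = timedelta(days=slack_days)
    return [name for name, start, end in updates if start - slack <= drop_date <= end + slack]
```

Comparing weekly averages rather than single days keeps normal weekday and weekend swings from being mistaken for the drop date.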

If your drop date coincides with a confirmed core update, you need to identify which pages dropped and what they have in common. Go to GSC, set the date range to compare before and after the update, sort pages by click change, and look at the pages that lost the most traffic. Are they thin pages? Older content that has not been updated? Pages without author information or clear expertise signals? The pattern will tell you what Google's update targeted.
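If you would rather work from exports than from the GSC interface, the page comparison is a simple join. This sketch assumes two GSC "Pages" exports loaded as dictionaries of URL to clicks, one for before the update and one for after; the function name is hypothetical, and pages missing from one period are treated as having zero clicks there.

```python
def biggest_losers(before, after, top_n=10):
    """Rank pages by click loss between two periods.

    before/after: dicts mapping page URL -> clicks for each period.
    Returns up to top_n (url, change) pairs, largest loss first.
    """
    urls = set(before) | set(after)
    changes = [(url, after.get(url, 0) - before.get(url, 0)) for url in urls]
    # Most negative change first; ties broken by URL so the output is stable.
    changes.sort(key=lambda item: (item[1], item[0]))
    return [item for item in changes if item[1] < 0][:top_n]
```

With `before = {"/a": 500, "/b": 300, "/c": 100}` and `after = {"/a": 200, "/b": 310, "/d": 50}`, the result is `[("/a", -300), ("/c", -100)]`: the pages to examine first for a shared pattern.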

The fix

Core update recovery requires improving the quality signals on your affected pages. This typically means adding genuine expertise and experience signals to your content (author credentials, first-hand experience, specific examples, and data), removing or consolidating thin pages that add volume without adding value, and improving the depth of your most important pages to match what the post-update SERP shows is rewarded.

Recovery from a core update usually takes one to three months of consistent work, with results typically visible after the next core update cycle when Google re-evaluates the affected content. There is no shortcut. The work is in genuinely improving the content quality, not in technical manipulation.

Reason 4: Your Content Is Now Thin by 2026 Standards

Google's definition of thin content has evolved significantly. In 2022, a 600-word article targeting a specific keyword with the right on-page signals could rank well. In 2026, that same article is competing against comprehensive guides, original research, and AI-generated content that covers every angle of a topic at once. Content that was competitive a few years ago may now be too thin to hold its rankings, even without an algorithm update targeting it.

Thin content in 2026 means content that ranks for a keyword but does not provide genuine, specific answers to the full range of questions a searcher on that topic would have. It also means content without clear author expertise signals, without first-hand experience, without named data sources, and without the entity relationships that tell Google you are a genuine authority in your space.

How to diagnose this cause

Take your top 5 pages that lost the most traffic. Open each page and honestly compare it to the top 3 results currently ranking for its primary keyword. Ask yourself: does your page cover everything those pages cover, and more? Does it have a named author with visible credentials? Does it cite specific data from named sources? Does it include FAQs that match what the People Also Ask section shows? If the answer is no to any of these, you have a thin content problem on that page.

The fix

Content refreshing is the highest-ROI activity for recovering traffic lost to thin content. Take your worst-performing important pages one at a time and run a structured improvement process on each: expand coverage to address every subtopic the current top-ranking pages cover, add sourced statistics, add a clear author bio with credentials, add a FAQ section targeting People Also Ask queries for that keyword, and add or improve the schema markup. Once the changes are substantive, update the page's visible last-updated date (and dateModified, if you use Article schema) so readers and Google can see the content is fresh; changing the date without real changes does not help.

The Claude AI SEO audit guide covers a complete content quality audit process you can run on any page in under 30 minutes using only free tools.

Reason 5: Technical Issues Are Blocking or Limiting Crawl

Technical SEO issues that block Google's ability to crawl and index your pages suppress traffic on every affected page, often across whole sections of a site rather than on individual posts. These issues tend to develop gradually and go unnoticed until they cross a threshold that triggers a noticeable drop.

The most common technical causes of traffic drops

A robots.txt change that blocked Googlebot. A single incorrect line in your robots.txt file can prevent Google from crawling entire sections of your site. If someone edited the robots.txt file recently, check it immediately by visiting yoursite.com/robots.txt and looking for Disallow rules that should not be there.
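To test a list of important URLs against your robots.txt instead of eyeballing it, Python's standard-library `urllib.robotparser` offers a quick screen. This is a sketch under one important assumption: `robotparser` applies rules by simple prefix match in file order and does not support Google's `*` and `$` wildcards or its longest-match precedence, so confirm anything it flags (or misses) with the robots.txt report in Google Search Console. The function name and example URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that robots_txt disallows for user_agent (prefix rules only)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

Paste the live file from yoursite.com/robots.txt in as `robots_txt` and pass your most important page URLs; any URL returned is one Googlebot is told not to crawl.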

Noindex tags applied accidentally. A WordPress plugin, theme update, or CMS setting change can add noindex directives to pages that should be indexed, either as a robots meta tag or as an X-Robots-Tag HTTP header. Check your most important pages using the URL Inspection tool in Google Search Console. If it reports that indexing is blocked by a "noindex" directive, Google will drop that page from search results.
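For more than a handful of pages, a scripted check is faster than inspecting each URL. This minimal sketch uses Python's standard-library `html.parser` to read `<meta name="robots">` and `<meta name="googlebot">` tags and also checks an `X-Robots-Tag` header value, since noindex can be sent either way. How you fetch the HTML and headers is left open, and the class and function names are hypothetical; the header handling is simplified to strip an optional `googlebot:`-style prefix.

```python
from html.parser import HTMLParser

NOINDEX = {"noindex", "none"}  # "none" means noindex plus nofollow


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attrs.get("content") or ""
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex(html, x_robots_tag=""):
    """True if the page's robots meta tags or X-Robots-Tag header contain noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # Header values may be prefixed with a user agent, e.g. "googlebot: noindex".
    header = [d.rsplit(":", 1)[-1].strip().lower() for d in x_robots_tag.split(",")]
    return bool(NOINDEX & set(parser.directives)) or bool(NOINDEX & set(header))
```

Any important page for which `has_noindex` returns `True` is worth confirming in the URL Inspection tool before you change the template or plugin setting that added it.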

Redirect chain issues. When pages that have earned external links redirect through several hops before reaching the final URL, each extra hop adds latency and crawl overhead and makes it less reliable that the value of those links reaches the destination. Google documents that Googlebot follows at most 10 redirect hops, so very long chains can fail outright. A clean single-hop redirect to the final URL is the safe default.
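Given a list of redirect pairs, for example from a crawler's redirect export, chains and loops can be found without any network requests. This sketch assumes the pairs are loaded into a dictionary of source URL to target URL; the function names are hypothetical, and `max_hops` defaults to 10 to mirror Googlebot's documented limit. `chains_to_fix` returns, for every multi-hop source, the final URL it should redirect to directly.

```python
def redirect_chain(start, redirects, max_hops=10):
    """Follow the redirect map from start and return every URL visited.

    Raises ValueError on a redirect loop or when the chain exceeds max_hops.
    """
    chain = [start]
    seen = {start}
    while chain[-1] in redirects:
        nxt = redirects[chain[-1]]
        if nxt in seen:
            raise ValueError("redirect loop: " + " -> ".join(chain + [nxt]))
        chain.append(nxt)
        seen.add(nxt)
        if len(chain) - 1 > max_hops:
            raise ValueError(f"more than {max_hops} hops starting at {start}")
    return chain


def chains_to_fix(redirects):
    """Map each source that needs 2+ hops to its final URL, for a direct redirect."""
    fixes = {}
    for source in redirects:
        chain = redirect_chain(source, redirects)
        if len(chain) - 1 > 1:
            fixes[source] = chain[-1]
    return fixes
```

For `{"/old": "/older", "/older": "/new", "/a": "/b"}`, `chains_to_fix` returns `{"/old": "/new"}`: point `/old` straight at `/new`, and leave the single-hop `/a` redirect alone.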

Core Web Vitals failures. Google uses Core Web Vitals as part of its page experience ranking signals. A site that recently introduced a slow-loading widget, a large unoptimized image, or a layout-shifting element may see gradual ranking suppression, often most visible on mobile devices.
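Google assesses Core Web Vitals at the 75th percentile of real-user page loads, with published boundaries: "good" is at or below 2.5 s for Largest Contentful Paint (LCP), 200 ms for Interaction to Next Paint (INP), and 0.1 for Cumulative Layout Shift (CLS), and "poor" is above 4 s, 500 ms, and 0.25. The sketch below applies those boundaries to a list of field samples. The nearest-rank percentile is a simplifying assumption (the Chrome UX Report computes its percentiles differently), and the function names are hypothetical.

```python
import math

# (good upper bound, poor lower bound). LCP and INP in milliseconds; CLS unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def p75(values):
    """75th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, samples):
    """Rate one metric's field samples as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"
```

Because the rating uses the 75th percentile, a page can fail even when most visits are fast: a quarter of slow loads, typically on weaker mobile devices, is enough.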

The fix

Crawl your site with Screaming Frog (free up to 500 URLs) and look for pages returning non-200 status codes, noindex tags on important pages, redirect chains longer than one hop, and missing canonical tags. Fix the highest-priority issues first, beginning with anything that blocks Googlebot or removes pages from the index. Then submit an updated sitemap to Google Search Console and request re-crawling of your most important affected pages.
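If your CMS does not generate a sitemap, or you want one limited to the pages you just fixed, a file following the sitemaps.org protocol is easy to produce. This is a minimal sketch using Python's standard-library `xml.etree.ElementTree`; the function name is hypothetical, and it assumes you supply each URL with its last significant modification date as an ISO 8601 string. ElementTree escapes characters such as `&` in URLs, which the protocol requires.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs, e.g. ("https://...", "2026-01-15")."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Keep `lastmod` honest: set it to the date of the last substantive content change, not the date the file was generated.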

Reason 6: Competitors Improved Their AI Visibility and Outranked You

Traffic drops do not always happen because something went wrong with your site. Sometimes your site stayed the same while competitors actively improved. In a search environment where generative engine optimization (GEO) and AI citation work increasingly determine which sites get cited and recommended, a competitor who invested in AI search visibility over the past six months may simply be capturing searches that your site used to win.

This is particularly common in competitive B2B niches where one or two well-resourced competitors have invested in comprehensive topical coverage, AI-optimized content structure, and entity authority building. Their visibility in AI Overviews and AI Mode results is growing while yours has stayed flat, and the traffic gap between you is widening even though you have not lost any rankings in traditional search.

How to diagnose this cause

Search your most important target keywords in Google and note whether competitor pages have begun appearing inside AI Overviews where they did not before. Also search those keywords in Perplexity and ChatGPT and check which sites are being cited in the AI-generated answers. If competitors appear consistently in AI citations and your site does not, you are losing AI-era search visibility even if your traditional rankings look stable.

The fix

The fix is systematic GEO and AEO (answer engine optimization) work: restructuring your content to earn AI citations rather than only to rank in traditional search results. The GEO and AEO optimization guide covers the full strategy for earning citations across Google AI Overviews, ChatGPT, Perplexity, and Claude. The short-term priority is the content on your most competitive pages: answer-first formatting, named statistics, entity consistency, and FAQPage schema.

Reason 7: Seasonal Patterns You Are Misreading as a Real Drop

Many website owners see a traffic drop and immediately assume something is broken, when the real cause is a predictable seasonal pattern they have not tracked before. B2B sites often see traffic dips during holiday periods, summer months, and the first two weeks of January. E-commerce sites often drop sharply right after peak shopping seasons. SaaS sites often dip in August, when many decision-makers are on vacation.

How to diagnose this cause

In Google Search Console, switch the date comparison from the previous period to the same period last year (the year-over-year comparison option). If your current traffic matches or exceeds last year's equivalent period, you do not have a real drop; you have a seasonal cycle. If your traffic is below the same period last year for three or more consecutive weeks, seasonal patterns alone do not explain it, and one of the other reasons in this guide is likely involved.
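The three-consecutive-weeks rule is easy to apply to exported data. This sketch assumes two equal-length lists of weekly click totals, aligned so that position `i` is the same calendar week in both years; the function names are hypothetical, and `min_weeks=3` follows the rule of thumb above.

```python
def weeks_below_last_year(this_year, last_year):
    """Count the most recent consecutive weeks with fewer clicks than the same week last year."""
    streak = 0
    for current, previous in zip(reversed(this_year), reversed(last_year)):
        if current < previous:
            streak += 1
        else:
            break
    return streak


def looks_seasonal(this_year, last_year, min_weeks=3):
    """True unless traffic has stayed below last year for min_weeks or more recent weeks."""
    return weeks_below_last_year(this_year, last_year) < min_weeks
```

With this year's weeks at `[900, 950, 700, 680, 650]` against last year's `[880, 940, 720, 700, 690]`, the last three weeks are all below last year, so `looks_seasonal` returns `False` and the drop deserves a closer look.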

Also set the GSC date range to the last 16 months, the longest range Search Console offers, to see a full annual cycle. If the current dip matches the shape and timing of last year's dip, it is seasonal. If it is deeper or started earlier, something changed.

The 7-Step Traffic Recovery Checklist

Once you have identified which reason or combination of reasons applies to your situation, use this checklist to work through the recovery actions in the correct order. Sequence matters. Fixing technical issues before improving content quality means your improved content gets crawled faster. Improving content quality before optimizing for AI citations means the content being cited is already strong.

- Verify your tracking is accurate. Check GA4 tag firing in Realtime. Compare GA4 Google organic sessions against GSC clicks for the same date range. The two will never match exactly, but if their trends diverge significantly, fix the tracking before doing anything else.

- Identify the drop pattern in GSC. Was it a sudden drop on a specific date (likely algorithm update or technical issue) or a gradual slide over weeks (likely AI Overview click absorption or thin content)? The pattern shapes the diagnosis.

- Fix any technical blockers first. Crawl with Screaming Frog. Check robots.txt. Check for noindex tags. Fix any redirect chains. Submit an updated sitemap. None of the content improvements below matter if Google cannot crawl your pages.

- Identify your top 10 pages by traffic loss. In GSC, compare the last 28 days to the previous 28 days and sort pages by click change. Focus your recovery effort on the 10 pages that lost the most traffic, not on new content creation.

- Refresh the highest-priority pages. For each page: expand coverage depth, add author credentials, add named statistics with sources, add or improve the FAQ section, and add FAQPage schema. Update the visible last-updated date once the changes are substantive. Request indexing for the URL with the URL Inspection tool in GSC.

- Optimize your most important pages for AI citation. Use the AI Overviews citation guide to restructure the top 5 pages for passage-level extraction, answer-first formatting, and entity authority signals.

- Monitor weekly for 60 days. Set a weekly GSC review reminder. Track impressions and clicks for each page you improved. Early signals of recovery typically appear within 2 to 4 weeks of content improvements. Full recovery for algorithm update cases typically takes 60 to 90 days.

When to Fix It Yourself vs Hire a Consultant

The 7-step checklist above is executable by any site owner with basic CMS access and 3 to 5 hours per week. If your drop is driven by one or two of the reasons in this guide and your site is under 50 pages, self-directed recovery is realistic and the free tools available in 2026 (Claude, Screaming Frog, GSC) make the diagnostic and improvement work significantly faster than it used to be.

There are three situations where a consultant delivers faster and more reliable recovery than self-directed work:

The cause is unclear after two weeks of investigation. If you have worked through this diagnostic framework and still cannot identify which of the seven reasons applies, the issue is likely a combination of factors or something in your specific competitive environment that requires outside perspective with experience in your niche.

Your site has more than 50 pages and the drop is widespread. Refreshing 5 pages is a weekend project. Diagnosing and refreshing 40 pages with different traffic patterns, while also managing technical fixes and AI citation optimization, is a sustained three-to-four-month engagement. At that scale, the risk of misdiagnosing the cause or deprioritizing the wrong pages is high without someone who has done it before.

The traffic loss is costing you meaningful revenue on a timeline. Self-directed recovery produces results over 60 to 90 days. If the traffic drop is affecting a business-critical conversion flow and you need faster results, professional implementation of the recovery strategy compresses that timeline significantly.

For US businesses that need professional support with traffic recovery, the SEO consulting service covers diagnostic, strategy, and implementation with a direct focus on 2026 AI-era search visibility.

Related Resources

- Google AI Mode Changed SEO: What to Do Now, the full strategy guide for adapting to AI Mode and recovering traffic in the new search landscape.

- How to Get Cited in Google AI Overviews, step-by-step guide for getting your content inside AI-generated answers rather than losing clicks to them.

- GEO and AEO Optimization: The Complete Guide, the full framework for earning citations across Google AI Overviews, ChatGPT, Perplexity, and Claude.

- How to Use Claude AI for a Complete SEO Audit, the free tool workflow for running a full content quality and technical audit in under 90 minutes.
